Elora Engine

Operator-governed AI runtime.

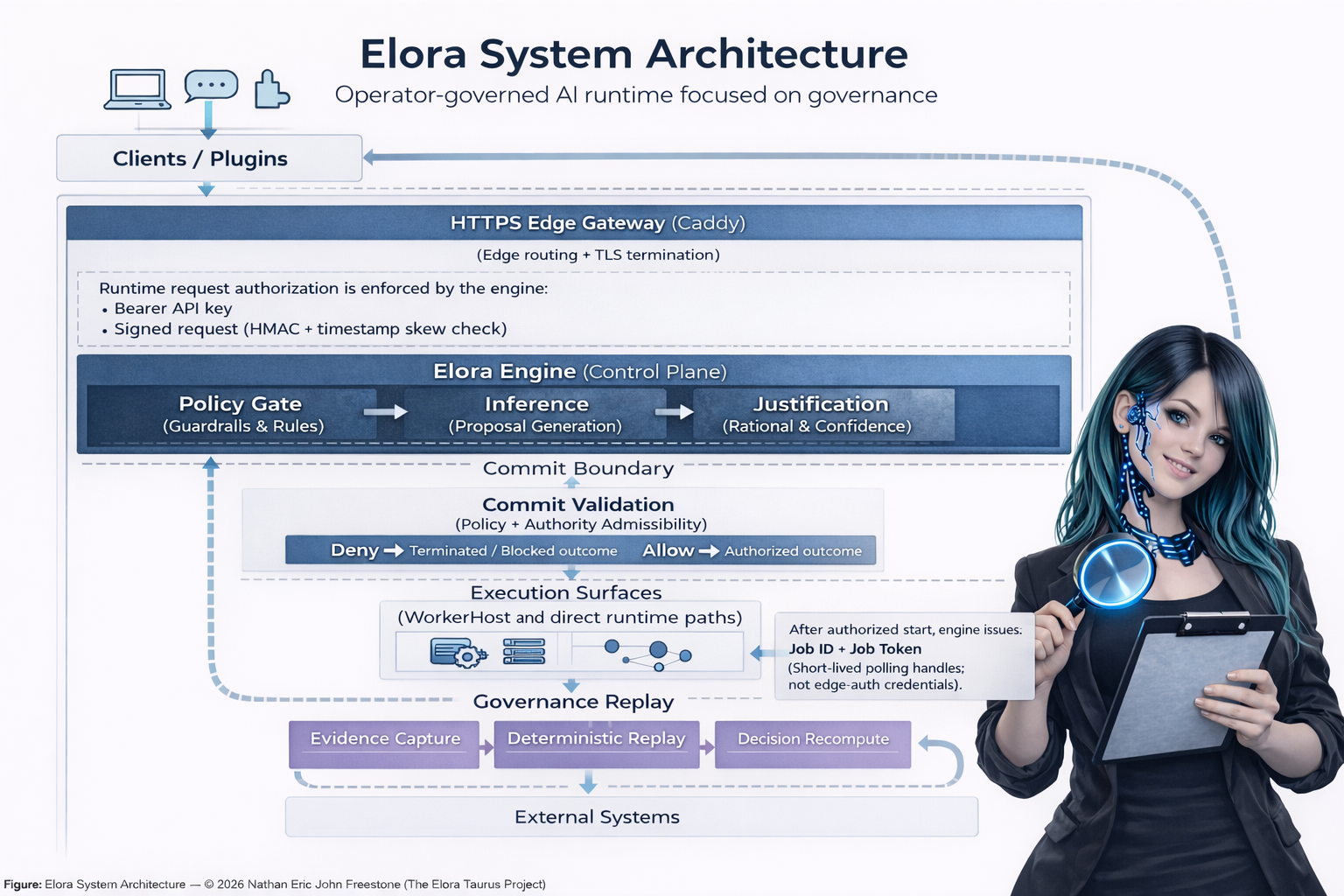

Elora is a UK-based independent project structured around a governance control plane. Inference generates proposals; authorization occurs at a deterministic commit boundary before delivery or execution.

Core flow, from client request to governed execution outcome. The Worker Fabric handles paths that execute external actions; direct-response paths can complete without worker dispatch.

```text
           Clients / Plugins
                   |
                   v
        Ingress / Edge Gateway
     (Authenticated Access Points)
                   |
                   v
========================================
             CONTROL PLANE
========================================
             Elora Engine
       (Control Plane Authority)
                   |
                   v
              Policy Gate
          (Guardrails & Rules)
                   |
                   v
               Inference
         (Proposal Generation)
                   |
                   v
             Justification
    (Confidence / Rationale Check)
------------ COMMIT BOUNDARY ------------
           Commit Validation
   (Policy + Authority Admissibility)
                   |
              +----+----+
              |         |
              v         v
            DENY      ALLOW
          Terminate  Execute
---------- EXECUTION BOUNDARY ----------
             Worker Fabric
        (WorkerHosts / Agents)
----------------------------------------
           GOVERNANCE REPLAY
    (Evidence / Replay / Recompute)
           Evidence Capture
                   |
         Deterministic Replay
                   |
          Decision Recompute
```
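To make the control-plane flow concrete, here is a minimal Python sketch of one job passing through the stages in the diagram. It is illustrative only: `Proposal`, `Outcome`, `run_job` and the callback parameters are hypothetical names, not Elora's API. It assumes every check reports findings forward and that commit validation, reduced here to "no outstanding findings", is the single place where DENY or ALLOW is decided. A real admissibility check would also consult authority and captured state.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass(frozen=True)
class Proposal:
    # Inference output: a candidate decision, not an authorization.
    action: str          # "respond" = direct response; anything else = external action
    confidence: float
    rationale: str

@dataclass
class Outcome:
    decision: str                                   # "DENY" or "ALLOW"
    path: str = "none"                              # "none", "direct" or "worker"
    reasons: List[str] = field(default_factory=list)

def run_job(request: Dict,
            policy_gate: Callable[[Dict], List[str]],
            infer: Callable[[Dict], Proposal],
            justify: Callable[[Proposal], List[str]],
            dispatch: Callable[[Proposal], None]) -> Outcome:
    # Policy Gate: guardrail and rule findings for this request.
    findings = [f"policy: {r}" for r in policy_gate(request)]
    # Inference: produces a proposal with no authority of its own.
    proposal = infer(request)
    # Justification: confidence / rationale findings on the proposal.
    findings += [f"justification: {r}" for r in justify(proposal)]

    # ---- commit boundary: the single point where DENY or ALLOW is decided ----
    if findings:
        return Outcome("DENY", reasons=findings)    # terminate; nothing executes

    # ---- execution boundary ----
    if proposal.action == "respond":
        return Outcome("ALLOW", path="direct")      # completes without worker dispatch
    dispatch(proposal)                              # Worker Fabric runs the external action
    return Outcome("ALLOW", path="worker")
```

For example, passing `justify=lambda p: [] if p.confidence >= 0.7 else ["low confidence"]` turns a low-confidence proposal into a DENY whose reasons name the failing check, and `dispatch` is never called.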

Governance evidence is captured on touch, as each stage is entered, rather than after the job completes, so every job is evidenced regardless of the point at which it fails.
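A minimal sketch of capture-on-touch, assuming an append-only evidence store (the in-memory `EvidenceLog` and `run_stages` below are hypothetical stand-ins). The key property is ordering: the "enter" record is written before a stage does any work, so a job that fails mid-pipeline still leaves a trail up to and including the failing stage.

```python
import time
from typing import Callable, List, Tuple

class EvidenceLog:
    """Append-only in-memory evidence store (hypothetical stand-in for durable storage)."""
    def __init__(self) -> None:
        self.records: List[dict] = []

    def capture(self, job_id: str, stage: str, event: str, detail: str = "") -> None:
        self.records.append({"job": job_id, "stage": stage, "event": event,
                             "detail": detail, "ts": time.time()})

def run_stages(job_id: str, stages: List[Tuple[str, Callable[[], None]]],
               log: EvidenceLog) -> bool:
    for name, fn in stages:
        # Capture on touch: the "enter" record is written before the stage runs.
        log.capture(job_id, name, "enter")
        try:
            fn()
        except Exception as exc:
            # The failing stage is evidenced; earlier stages already were.
            log.capture(job_id, name, "fail", repr(exc))
            return False
        log.capture(job_id, name, "exit")
    return True
```

If inference raises, the log holds `enter`/`exit` for the policy gate and `enter`/`fail` for inference, and nothing for stages the job never reached.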

Caddy provides TLS edge routing and controlled ingress paths. Request authorization is enforced by the engine itself, using a bearer API key plus signed-request validation.
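The engine-side check can be sketched as follows. The scheme here is an assumption, not Elora's documented wire format: the bearer key selects a shared secret, the client sends `X-Timestamp` and `X-Signature` headers (hypothetical names), and the signature is HMAC-SHA256 over a newline-joined canonical string of method, path, timestamp and body hash. `API_KEYS`, `MAX_SKEW_SECONDS` and the function names are likewise illustrative.

```python
import hashlib
import hmac
import time
from typing import Dict, Optional

# Hypothetical key store: bearer API key -> shared request-signing secret.
API_KEYS: Dict[str, bytes] = {"key_demo": b"demo-signing-secret"}
MAX_SKEW_SECONDS = 300  # assumed freshness window for X-Timestamp

def canonical_string(method: str, path: str, timestamp: str, body: bytes) -> bytes:
    # Assumed canonical form: method, path, timestamp and body hash, newline-joined.
    body_hash = hashlib.sha256(body).hexdigest()
    return "\n".join([method.upper(), path, timestamp, body_hash]).encode()

def sign(secret: bytes, method: str, path: str, timestamp: str, body: bytes) -> str:
    msg = canonical_string(method, path, timestamp, body)
    return hmac.new(secret, msg, hashlib.sha256).hexdigest()

def authorize(headers: Dict[str, str], method: str, path: str, body: bytes,
              now: Optional[float] = None) -> bool:
    # 1. Bearer API key must be present and known.
    auth = headers.get("Authorization", "")
    if not auth.startswith("Bearer "):
        return False
    secret = API_KEYS.get(auth[len("Bearer "):])
    if secret is None:
        return False
    # 2. Timestamp must be fresh, limiting replay of captured requests.
    ts = headers.get("X-Timestamp", "")
    if not ts.isdecimal():
        return False
    current = time.time() if now is None else now
    if abs(current - int(ts)) > MAX_SKEW_SECONDS:
        return False
    # 3. Signature must match, compared in constant time.
    expected = sign(secret, method, path, ts, body)
    return hmac.compare_digest(expected.encode(), headers.get("X-Signature", "").encode())
```

Because the timestamp is inside the signed string, a captured request cannot be refreshed by editing its header, and a leaked bearer key alone is not enough to forge a request without the signing secret.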

Model outputs are treated as candidate decisions, not authority.

Admissibility is evaluated against captured state at the commit boundary.
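One way to keep the commit decision deterministic is to make it a pure function of the proposal and a snapshot of state captured at the boundary, then store both alongside the decision. The sketch below assumes that design; `Snapshot`, its fields, `commit_validate`, `record_decision` and `recompute` are hypothetical. The model's output only supplies the inputs (action, confidence); authority comes from the snapshot. The `reasons` list is the kind of explanation an operator surface could show for an allowed or blocked outcome.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class Snapshot:
    # State captured at the commit boundary (hypothetical fields).
    policy_version: str
    allowed_actions: Tuple[str, ...]
    operator_scopes: Tuple[str, ...]
    min_confidence: float

def snapshot_digest(s: Snapshot) -> str:
    # Stable hash, so a replay can show it used exactly the captured state.
    return hashlib.sha256(json.dumps(asdict(s), sort_keys=True).encode()).hexdigest()

def commit_validate(action: str, confidence: float, scope: str,
                    s: Snapshot) -> Tuple[str, List[str]]:
    # Pure function of (proposal, snapshot): no clock, no I/O, no live lookups.
    reasons = []
    if action not in s.allowed_actions:
        reasons.append(f"action '{action}' not permitted by policy {s.policy_version}")
    if scope not in s.operator_scopes:
        reasons.append(f"operator lacks scope '{scope}'")
    if confidence < s.min_confidence:
        reasons.append(f"confidence {confidence} below {s.min_confidence}")
    return ("DENY" if reasons else "ALLOW"), reasons

def record_decision(action: str, confidence: float, scope: str, s: Snapshot) -> dict:
    decision, reasons = commit_validate(action, confidence, scope, s)
    return {"proposal": {"action": action, "confidence": confidence, "scope": scope},
            "snapshot": asdict(s), "digest": snapshot_digest(s),
            "decision": decision, "reasons": reasons}

def recompute(record: dict) -> bool:
    # Rebuild the captured state and re-run the same pure check.
    snap = record["snapshot"]
    s = Snapshot(snap["policy_version"], tuple(snap["allowed_actions"]),
                 tuple(snap["operator_scopes"]), snap["min_confidence"])
    if snapshot_digest(s) != record["digest"]:
        return False
    p = record["proposal"]
    decision, reasons = commit_validate(p["action"], p["confidence"], p["scope"], s)
    return decision == record["decision"] and reasons == record["reasons"]
```

Since nothing in `commit_validate` reads live state, recomputing a stored record later yields the same decision and reasons, and a record whose decision was altered after the fact fails recomputation.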

After an authorized start request, the engine issues short-lived job identifiers and tokens for status polling. These are scoped runtime handles, not substitutes for edge authentication.
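A minimal sketch of such a handle, assuming an HMAC-signed token that binds a job ID, a single `status:read` scope and an expiry time. The token layout, `SERVER_KEY`, `TOKEN_TTL_SECONDS`, `issue_job_handle` and `check_status_token` are hypothetical. The token can only read the status of the one job it names, only until it expires, and it is never accepted in place of the bearer key and request signature required to start a job.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional, Tuple

SERVER_KEY = secrets.token_bytes(32)   # hypothetical per-deployment signing key
TOKEN_TTL_SECONDS = 120                # assumed token lifetime

def _mac(msg: str) -> str:
    return hmac.new(SERVER_KEY, msg.encode(), hashlib.sha256).hexdigest()

def issue_job_handle(now: Optional[float] = None) -> Tuple[str, str]:
    # Called only after the start request has already been authorized.
    now = time.time() if now is None else now
    job_id = "job_" + secrets.token_hex(8)
    exp = int(now) + TOKEN_TTL_SECONDS
    body = f"{job_id}|status:read|{exp}"
    return job_id, f"{body}|{_mac(body)}"

def check_status_token(token: str, job_id: str, now: Optional[float] = None) -> bool:
    now = time.time() if now is None else now
    parts = token.split("|")
    if len(parts) != 4:
        return False
    tok_job, scope, exp, sig = parts
    # Reject any token whose fields were not produced by this server.
    if not hmac.compare_digest(_mac(f"{tok_job}|{scope}|{exp}").encode(), sig.encode()):
        return False
    # Scoped: one job, read-only status, and only until expiry.
    return (tok_job == job_id and scope == "status:read"
            and exp.isdecimal() and now < int(exp))
```

A token issued at `now=1000.0` is accepted for its own job at `now=1060.0` and rejected at `now=1121.0`, for any other job ID, or if its scope field is edited.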

Operator surfaces explain why an outcome was allowed or blocked.